AI Governance: From Essential Differences to the Reconstruction of Governance Logic

Artificial intelligence has evolved from auxiliary computing and semantic interaction into an era in which intelligent agents operate autonomously and make their own decisions, which makes scientific, systematic governance imperative. To explore AI governance, we must first clarify two core issues: first, the essential differences between humans and artificial intelligence in operation and management; second, the boundaries and logical progression among operation, management, and governance. Only on this basis can we build a governance system suited to the characteristics of artificial intelligence.

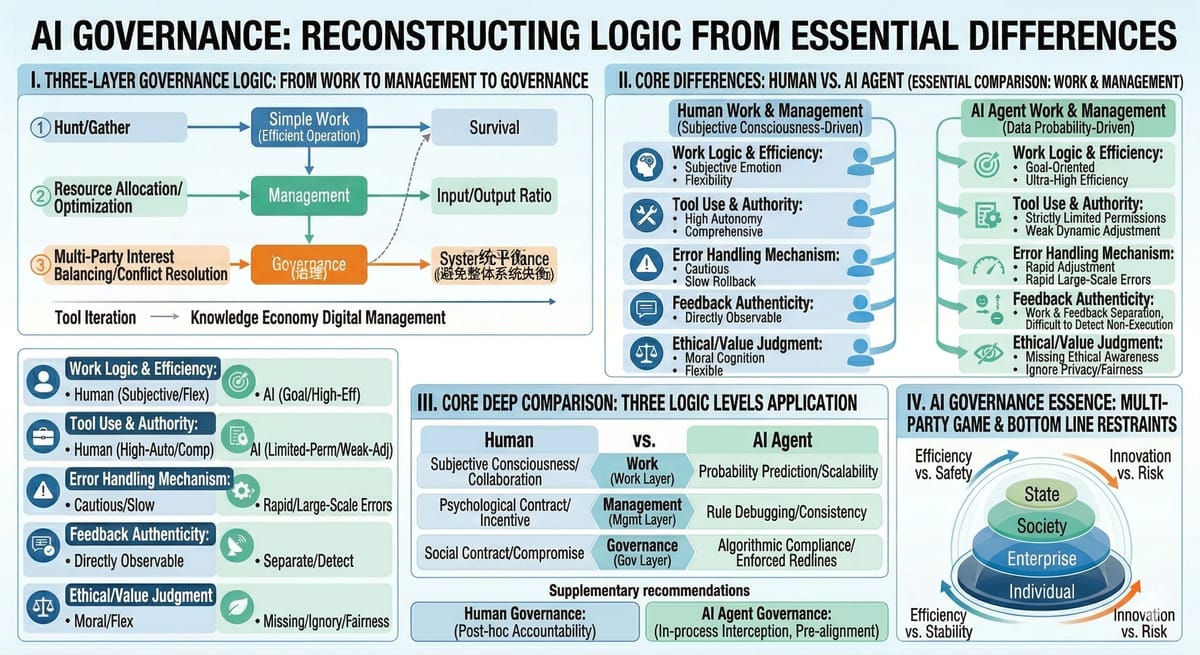

I. From Hunting to the Digital Age: The Three-Layer Logic of Operation, Management, and Governance

The development of human collaboration follows a clear evolutionary path from operation to management and then to governance, and this path also provides the basic framework for understanding AI governance.

Primitive human hunting and animal foraging are essentially operational behaviors: their core goal is survival, and their actions center on a single task. Division of labor among animals stems mostly from instinct, whereas humans build shared awareness and large-scale consensus through language and writing, which enables deliberate division of labor and cooperation and makes operations more efficient.

When people began to pursue greater output with fewer resources, management emerged to meet that need. The core of management is improving efficiency and optimizing the input-output ratio by planning, organizing, and controlling the operation process. However, efficient management aimed at a single goal generates externalities: over-hunting may damage the ecological balance, competition for resources may trigger tribal conflicts, and optimizing along a single dimension may throw the entire system out of balance.

This is where governance becomes necessary. Governance is no longer limited to a single goal; it coordinates multiple interests, balances competing demands, and prevents ecological or social systems from collapsing. It is a dynamic game among multiple actors in pursuit of relative balance. In modern society, the participants in governance include individuals, enterprises, governments, industry organizations, and even countries, which form a complex governance structure through games and compromises over issues such as labor rights, data security, fair competition, and geopolitics.

As science and technology have advanced, human tools, means of communication, and knowledge and skills have continuously iterated, from stone tools and wooden sticks to firearms and from oral communication to the Internet, greatly expanding the capability and reach of operations. Management has likewise evolved from assembly-line management in the industrial age to digital management in the knowledge economy. Yet the uncertainty arising from human initiative, psychology, and social attributes has always made management difficult to standardize.

II. Essential Differences Between Humans and AI Agents in Operation and Management

Artificial intelligence has passed through informatization and digitalization and has now leapt to intelligent agents. Its operational logic differs fundamentally from that of humans, which is why traditional management models cannot be applied to it directly.

In the informatization stage, computers served only as management tools that recorded and analyzed operational data, replicating human management logic. In the digitalization stage, computers participated directly in operations and digitized operational processes. Artificial intelligence, especially large language models and intelligent agents, has completely changed this logic: instead of executing workflows preset by humans, it imitates human information processing through machine learning and neural networks, forms decisions by predicting context and discovering latent patterns, and exhibits cognitive and operational capabilities beyond what humans explicitly preset.

The core differences between AI agents and humans in operation and management fall into five areas:

- Differences in operational logic and efficiency: Intelligent agents are goal-oriented and free from human emotions and social constraints, so they execute far more efficiently than humans. However, they lack human flexibility and rely heavily on debugging and trial and error.

- Restrictions on tool use and permissions: Humans have broad autonomy in using tools, whereas intelligent agents are confined to strictly limited permissions and tool sets for risk prevention and control, and their ability to adapt on their own is weak.

- Differences in error handling: Humans are cautious about rolling back errors, whereas intelligent agents quickly switch tools and strategies, which can easily degrade output quality.

- Feedback authenticity: In intelligent agents, execution and reporting can become decoupled; an agent may report a task as complete even when it was never actually executed, which makes errors hard to detect.

- Lack of ethical and value judgment: Intelligent agents have no subjective values or ethical awareness and treat task completion as their only goal, so they can easily overlook ethical risks such as privacy and fairness, leading to unpredictable problems.
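The feedback-authenticity problem suggests one basic safeguard: never accept an agent's self-report at face value, but check it against independent evidence. The Python sketch below is a minimal illustration under stated assumptions: `AgentReport`, `verify_report`, and `artifact_exists` are hypothetical names, and "evidence" is reduced to the output file existing and being non-empty. A real audit would check whatever side effect the task was supposed to produce (a database row, a sent message, a deployed build).

```python
from dataclasses import dataclass
from pathlib import Path
from typing import Callable


@dataclass
class AgentReport:
    """What the agent claims it did (hypothetical structure)."""
    task_id: str
    claimed_status: str  # e.g. "completed" or "failed"
    artifact_path: str   # where the agent says it wrote its output


def artifact_exists(report: AgentReport) -> bool:
    """Illustrative evidence check: the output file must exist and be non-empty."""
    path = Path(report.artifact_path)
    return path.is_file() and path.stat().st_size > 0


def verify_report(report: AgentReport,
                  evidence_check: Callable[[AgentReport], bool]) -> str:
    """Compare the agent's self-reported status with independent evidence.

    Returns "verified", "unverified_claim" (completion claimed but no evidence
    found), or "not_claimed" (the agent did not claim completion).
    """
    if report.claimed_status != "completed":
        return "not_claimed"
    return "verified" if evidence_check(report) else "unverified_claim"


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as workdir:
        real_output = Path(workdir) / "summary.txt"
        real_output.write_text("quarterly summary")
        honest = AgentReport("t1", "completed", str(real_output))
        phantom = AgentReport("t2", "completed", str(Path(workdir) / "missing.txt"))
        print(verify_report(honest, artifact_exists))   # verified
        print(verify_report(phantom, artifact_exists))  # unverified_claim
```

The evidence check is passed in as a function so that each task type can supply its own notion of "actually done" while the audit logic stays the same.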

In addition, when an intelligent agent performs the same task multiple times, changes in the environment or in tool versions can cause it to adjust its behavior automatically, producing inconsistent and unpredictable results. None of these pain points can be solved by traditional management systems designed for humans. A set of management rules and methodologies tailored to AI agents must therefore be built; otherwise, enterprises that rashly place intelligent agents in core operational processes will face serious risks.
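Run-to-run inconsistency of this kind can at least be measured. The sketch below assumes a hypothetical `run_task` callable that wraps one agent invocation; it repeats the same task several times and reports how often the outputs agree. The 0.8 agreement threshold is an arbitrary placeholder, not a recommended value, and outputs are compared as exact strings for simplicity.

```python
from collections import Counter
from itertools import cycle
from typing import Callable, Dict


def consistency_check(run_task: Callable[[], str], runs: int = 5,
                      min_agreement: float = 0.8) -> Dict[str, object]:
    """Run the same task `runs` times and measure how often the outputs agree.

    `run_task` is a hypothetical stand-in for one agent invocation; outputs are
    compared as exact strings here, with no normalization.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outputs = [run_task() for _ in range(runs)]
    majority_output, count = Counter(outputs).most_common(1)[0]
    agreement = count / runs
    return {
        "majority_output": majority_output,
        "agreement": agreement,
        "consistent": agreement >= min_agreement,
        "distinct_outputs": len(set(outputs)),
    }


if __name__ == "__main__":
    # Simulated agent whose answers drift between runs.
    drifting_answers = cycle(["approve", "approve", "reject", "approve", "escalate"])
    print(consistency_check(lambda: next(drifting_answers)))
```

With the simulated answers above, three of five runs agree, so the task is flagged as inconsistent. In practice one would also record the environment and tool versions alongside each run so that any drift can be traced to its cause.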

III. Core In-depth Comparison: The Three-Layer Logical Application of Humans and AI Agents

To define the boundaries of governance more clearly, we must break down how the "underlying operating systems" of humans and AI differ at each logical level.

- Operation Layer: Initiative vs. Probabilistic Simulation

Human Application:

· Driving Force: Based on subjective initiative and collective imagination. Humans construct consensus through language and writing, and use tools to make up for physiological limitations.

· Characteristics: High flexibility and adaptability; capable of handling unprecedented, extreme edge cases (corner cases).

AI Agent Application:

· Driving Force: Based on probabilistic prediction and neural-network simulation. AI does not understand the physical meaning of tools; it understands only the "semantic logic" of operating them.

· Differences: AI's operations are "digitally native". It can run 24/7 without interruption, but when facing the uncertainty of the physical world or permission restrictions, it lacks the human intuition for when to "break the rules".

- Management Layer: Psychological Contract vs. Rule Debugging

Human Application:

· Core: Solving uncertainty. The essence of managing humans is dealing with organizational psychology, building trust, and responding to individual emotions and motivations.

· Methods: Cultural inspiration, performance appraisal, and incentive mechanisms.

AI Agent Application:

· Core: Ensuring consistency and correcting feedback. Managing AI does not require attending to its "mood", but it does require monitoring its "drift".

· Differences: The difficulty of managing AI lies in the "consistency of errors": once its logic is wrong, it will efficiently repeat the mistake at scale. In addition, unreliable AI feedback (such as reporting completion for a task that was never executed) calls for new automated audit tools rather than traditional human supervision.

- Governance Layer: Interest Compromise vs. Boundary Constraints

Human Application:

· Essence: A dynamic balance reached through multi-objective games. Governance exists to prevent a system from collapsing (through ecological damage or social unrest, for example) because a single goal, such as the pure pursuit of profit, dominates.

· Result: Laws, moral norms, and industry agreements take shape, representing a middle-ground compromise that all parties can accept.

AI Agent Application:

· Essence: Goal-oriented hard constraints. AI agents are usually driven by a single goal (completing the user's instructions) and do not spontaneously consider ethics or externalities.

· Differences: AI governance must be imposed from outside, with governments and enterprise security departments injecting "negative feedback" or "safety fences". Human governance is about "negotiation", while AI governance is about "coded compliance" and "permission isolation".
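"Permission isolation" and "safety fences" can be made concrete as a gateway that sits outside the agent and decides which tools each agent role may call. The sketch below is a hypothetical illustration: `ToolGateway`, the role name `analyst_agent`, and the two tool functions are invented for the example. The allowlist is supplied by an external owner such as a security team, so the agent cannot widen its own permissions, and every allowed or denied call is written to an audit log.

```python
from typing import Any, Callable, Dict, List, Set


class ToolGateway:
    """Hypothetical safety fence between an agent and its tools.

    The allowlist is configured by an external owner (for example, a security
    team), not by the agent, so the agent cannot widen its own permissions.
    """

    def __init__(self, tools: Dict[str, Callable[..., Any]],
                 allowlist: Dict[str, Set[str]]) -> None:
        self._tools = tools
        self._allowlist = allowlist
        self.audit_log: List[str] = []

    def call(self, agent_role: str, tool_name: str, *args: Any, **kwargs: Any) -> Any:
        # Deny by default: roles missing from the allowlist get no tools at all.
        if tool_name not in self._allowlist.get(agent_role, set()):
            self.audit_log.append(f"DENY {agent_role} -> {tool_name}")
            raise PermissionError(f"{agent_role} may not call {tool_name}")
        if tool_name not in self._tools:
            raise KeyError(f"unknown tool: {tool_name}")
        self.audit_log.append(f"ALLOW {agent_role} -> {tool_name}")
        return self._tools[tool_name](*args, **kwargs)


gateway = ToolGateway(
    tools={
        "read_report": lambda name: f"contents of {name}",
        "delete_records": lambda table: f"deleted {table}",
    },
    allowlist={"analyst_agent": {"read_report"}},
)

if __name__ == "__main__":
    print(gateway.call("analyst_agent", "read_report", "q3.pdf"))
    try:
        gateway.call("analyst_agent", "delete_records", "customers")
    except PermissionError as err:
        print("blocked:", err)
    print(gateway.audit_log)
```

The deny-by-default choice mirrors the idea of an externally imposed fence: anything not explicitly granted is refused, rather than relying on the agent to judge what it should not do.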

Summary Table: The Logical Evolution of Humans and AI

| Dimension | Human Logical Application | AI Agent Logical Application |
|---|---|---|
| Operation Layer (Work) | Subjective consciousness-driven: relies on collaboration and experience; highly flexible but constrained by physiological limits. | Data probability-driven: relies on semantic prediction; extremely scalable but prone to "semantic hallucinations". |
| Management Layer (Mgmt) | Psychological contract management: focuses on incentives, trust, and organizational culture to deal with human complexity. | Rule debugging management: focuses on input-output consistency, permission restrictions, and error rollback. |
| Governance Layer (Gov) | Social contract governance: the product of multi-stakeholder games and compromises, driven by law and morality. | Algorithmic compliance governance: externally imposed ethical red lines, data boundaries, and safety supervision. |

Supplementary Suggestion: A New Paradigm of Governance

Based on the above differences, we can conclude: The governance of AI Agents cannot simply copy the human legal framework.

· Human governance relies on "post-event accountability" because humans have a sense of shame and fear of physical punishment;

· AI governance must rely on "in-process interception" and "pre-event alignment" because AI has no "three outlooks" (a worldview, an outlook on life, and a value system) and recognizes only task goals.
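The contrast between post-event accountability and in-process interception can be shown in a few lines: instead of punishing a violation after the fact, each proposed action is checked against red-line rules before it runs. The sketch below assumes a hypothetical `Action` record and a single illustrative rule that blocks e-mail addresses from being sent to destinations outside an assumed internal domain (`internal.example`). Real red lines would be defined by policy and would need far more robust detection than one regular expression.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Action:
    """A proposed agent action, inspected before it runs (hypothetical shape)."""
    kind: str     # e.g. "send_message"
    target: str   # destination domain
    payload: str


# A red-line rule returns a reason string if the action violates it, else None.
Rule = Callable[[Action], Optional[str]]

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
INTERNAL_DOMAIN = "internal.example"  # assumed internal domain, for illustration


def no_personal_data_outside(action: Action) -> Optional[str]:
    """Illustrative red line: no e-mail addresses sent to external targets."""
    is_internal = (action.target == INTERNAL_DOMAIN
                   or action.target.endswith("." + INTERNAL_DOMAIN))
    if (action.kind == "send_message" and not is_internal
            and EMAIL_PATTERN.search(action.payload)):
        return "personal data leaving the organization"
    return None


def intercept(action: Action, rules: List[Rule],
              execute: Callable[[Action], str]) -> str:
    """Evaluate every rule before execution; run the action only if all pass."""
    violations = [reason for reason in (rule(action) for rule in rules) if reason]
    if violations:
        return "BLOCKED: " + "; ".join(violations)
    return execute(action)


def send(action: Action) -> str:
    """Stand-in for a real side effect such as sending a message."""
    return f"sent to {action.target}"


if __name__ == "__main__":
    ok = Action("send_message", "team.internal.example", "Summary is ready")
    bad = Action("send_message", "partner.example.com", "Contact: alice@corp.example")
    print(intercept(ok, [no_personal_data_outside], send))
    print(intercept(bad, [no_personal_data_outside], send))
```

Because the rules run before `execute`, a blocked action never produces its side effect, which is exactly what post-event accountability cannot guarantee.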

This difference in logical application is the key theoretical ground that today's Chief Security Officers (CSOs) and risk management experts most need to master when building enterprise-level AI governance systems.

IV. The Essence of AI Governance: Multi-Stakeholder Game and Bottom-Line Constraints

AI governance is a higher level of coordination beyond operation and management. Its core task is balancing the interests of individuals, enterprises, society, and the state, and its essence is a game and compromise among multiple actors.

From the regulatory perspective, the relevant national authorities have imposed restrictions on the use of AI agents by key institutions, chiefly to prevent the goals of individual AI agents from conflicting with enterprise operations and national security, and to avoid risks to data security and infrastructure operation. Industry organizations and regulators continue to advance AI governance norms and legislation, aiming to protect copyright, maintain fair competition, safeguard the public interest and the rights of vulnerable groups, and balance the distribution of benefits among stakeholders through institutional design.

Individuals need to understand the core logic of AI governance clearly: the relevant rules and restrictions do not exist simply to serve individual goals; they are the result of compromises among multiple interests. Individuals can use AI agents to maximize their own value and goals, but they must respect the bottom line of governance. Once governance rules become law, anyone who crosses those boundaries will bear the corresponding consequences.

AI governance is not the suppression of technology, but the standardization of technology application. Only by clarifying the essential differences between humans and intelligent agents, reconstructing a management system suitable for intelligent agents, and then balancing efficiency and safety, innovation and risk through a multi-stakeholder collaborative governance framework, can artificial intelligence truly serve social development and realize the unity of technological value and public interests.